Kubernetes is now one of the most widely adopted infrastructure technologies, and many companies are moving their workloads to it. Because of this rapid adoption, Kubernetes knowledge has become important for engineers. There is currently a huge demand for Kubernetes professionals – administrators, DevOps engineers, application developers, support engineers, security professionals and others.

I am writing this article as a quick reference for engineers who are new to Kubernetes, especially support engineers (KTLO engineers) who monitor the environment. There are many documents and textbooks about Kubernetes. This is my attempt at a short reference handbook that helps new engineers rapidly gain the knowledge needed to operate and manage Kubernetes. The fundamentals are explained briefly so that the entire framework can be understood within a short period of time. I will keep enhancing this handbook to make it more useful.

I have written another article as a quick reference to all the frequently used Kubernetes commands – Kubernetes Cheat Sheet.

Prerequisites

Basic knowledge of Software systems, Linux, Shell scripting, Docker, Python (useful)

Contents

- Introduction

- History of Kubernetes

- Architecture of Kubernetes

- Basic Concepts

- Container Registry

- Control Plane

- Worker Nodes

- Namespace

- PODs

- Deployments

- Services

- Daemon sets

- Volumes

- Persistent Volumes

- Persistent Volume Claim

- Ingress

- Egress

- Ingress Controller

- Managing Secrets

- Basic Commands for reference

- Conclusion

Introduction

Kubernetes is an open source container orchestration platform. It simplifies application deployment, scaling, networking and management. With Kubernetes, applications can be deployed, upgraded, and scaled up or down with little or no downtime. It also makes it much easier for application developers to maintain their deployments. Deployments no longer need dedicated manual effort, and the whole process can be automated, for example through GitOps.

Kubernetes is also known as K8s.

Kubernetes is a distributed system, which makes application deployments fault tolerant. The pods of a deployment are distributed among the cluster nodes, so as long as replicas are running on other nodes, the failure of a single node does not bring down the application. We can also scale the application up or down simply by adjusting the number of replicas, and the change usually takes effect within seconds.

Application upgrades become very easy. We can upgrade or roll back with little or no downtime. Engineers don’t need to worry about the complexities of the upgrade and rollback process because Kubernetes manages it for them.

Resource management becomes very easy. Through Kubernetes we can allocate resources (CPU, memory, storage) to an application and control its usage.

Kubernetes is now available as a managed service from popular cloud service providers such as Google Cloud, Amazon Web Services and Microsoft Azure. So we don’t have to worry about setting up the infrastructure and installing Kubernetes, and can focus on application development.

Overall Kubernetes speeds up the application development.

History of Kubernetes

Kubernetes was created by a group of Google engineers and announced in 2014. The founders include the engineers Joe Beda, Brendan Burns, Craig McLuckie, Brian Grant and Tim Hockin.

Kubernetes is the Greek word for helmsman, pilot or governor. The Kubernetes project evolved from Borg, one of Google’s internal systems.

Architecture of Kubernetes

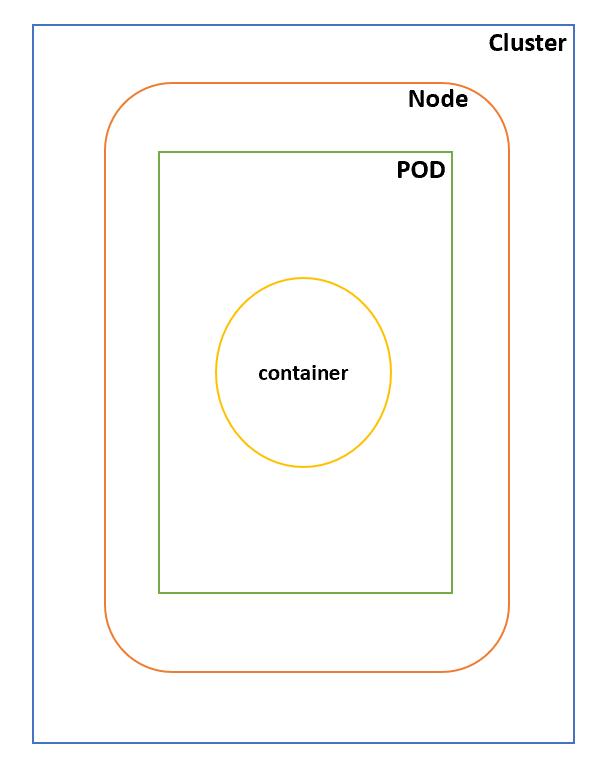

The above diagram shows the simple architecture of a Kubernetes cluster.

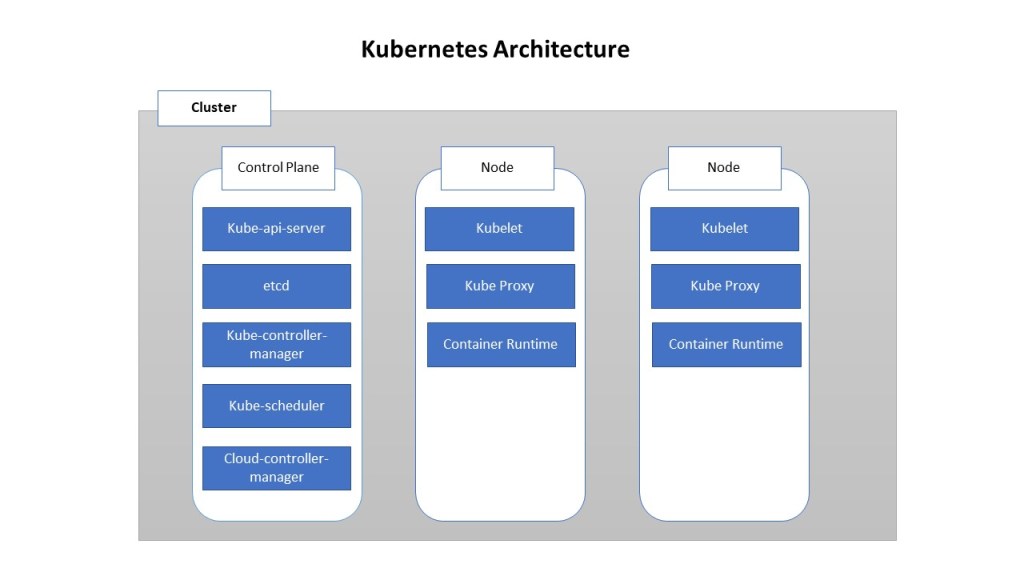

A Kubernetes cluster has master nodes and worker nodes. There should be at least one master node and one or more worker nodes. For high availability of the cluster, we need to add additional master nodes.

A Kubernetes cluster is highly scalable. The cluster capacity can be scaled horizontally by adding more worker nodes to the cluster. Adding and removing worker nodes is easy and can be performed at any point in time.

The deployed applications will run on the worker nodes and the overall management operation of the cluster is performed by the master nodes.

In the diagram shown above, we have one master node and two worker nodes. Now let us discuss the various components that are part of master nodes and worker nodes in Kubernetes.

Master node or Control Plane

This is the heart of the Kubernetes cluster. It stores the information about the entire cluster, and it manages and schedules the deployments happening across the cluster. There can be one or more control plane nodes in a Kubernetes cluster. More than one control plane node is configured for high availability and failover.

The various components in the master node or control plane are explained below.

- Kube API Server – This is the daemon responsible for handling API communication with the control plane. All requests from Kubernetes clients reach the API server. A popular example of a Kubernetes client is kubectl.

- etcd – etcd is a distributed key-value store. It plays a critical role in the Kubernetes cluster because it stores all the information about the cluster. For this reason the data in etcd is very important, and we need to ensure that etcd is fault tolerant.

- Kube Controller Manager – This daemon embeds the core control loops that ship with Kubernetes. The main role of a controller is to keep the actual state of the cluster in line with the desired state. Some of the controllers that come as part of Kubernetes are the replication controller, endpoints controller, namespace controller, and service accounts controller.

- Kube Scheduler – The scheduler assigns newly created pods to specific worker nodes based on the available resources (CPU, memory, disk) and the requirements of the pod. This daemon handles the scheduling job in Kubernetes.

- Cloud Controller Manager – This component helps integrate Kubernetes with the APIs of cloud service providers, for example when the cluster runs on Google Cloud or AWS.
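To make the scheduler's job more concrete, here is a toy sketch of the idea in Python. It is not the real kube-scheduler algorithm: the `Node` class, the `pick_node` function, the node names and the "most free CPU wins" rule are all assumptions made up for illustration. It only shows the two broad steps of filtering out nodes that cannot fit a pod and then choosing one of the remaining nodes.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Node:
    """Hypothetical view of a worker node's free capacity."""
    name: str
    free_cpu_millicores: int
    free_memory_mib: int


def pick_node(nodes: List[Node], cpu_millicores: int, memory_mib: int) -> Optional[str]:
    """Return the name of a node that can fit the request, or None."""
    # Filtering step: keep only nodes with enough free CPU and memory.
    candidates = [
        n for n in nodes
        if n.free_cpu_millicores >= cpu_millicores and n.free_memory_mib >= memory_mib
    ]
    if not candidates:
        return None  # nothing fits, so the pod would stay in the Pending phase
    # Scoring step (toy rule): prefer the node with the most free CPU.
    best = max(candidates, key=lambda n: n.free_cpu_millicores)
    return best.name


nodes = [
    Node("worker-1", free_cpu_millicores=500, free_memory_mib=1024),
    Node("worker-2", free_cpu_millicores=2000, free_memory_mib=4096),
]
print(pick_node(nodes, cpu_millicores=1000, memory_mib=2048))
print(pick_node(nodes, cpu_millicores=4000, memory_mib=2048))
```

The first request fits only on worker-2, while the second request is larger than any node's free capacity, so no node is returned.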

Worker node or Node

This is the node on which the applications get deployed. The control plane sends instructions to these nodes and manages the deployments and workloads.

- Kubelet – This daemon runs on every node and acts as the gateway between the Kubernetes control plane and the node. All communication from the control plane to the node passes through the kubelet. The kubelet does not talk to etcd directly; it reports node and pod status to the API server, which keeps the information in etcd in sync.

- Kube Proxy – This daemon is responsible for managing the network rules on every node of the Kubernetes cluster. These rules route requests addressed to a service to the pods behind it. It is a per-node proxy service.

- Container Runtime – This is a critical component on every node of a Kubernetes cluster. Kubernetes is a container orchestration platform, so every node in the cluster needs a container runtime to run the applications. Popular container runtimes are containerd and CRI-O. Docker Engine and rkt were also used in the past; recent Kubernetes versions need an additional adapter to work with Docker Engine, and rkt is no longer maintained.

Namespaces

A Kubernetes cluster can be divided into smaller virtual environments known as namespaces. Namespaces provide a mechanism to isolate and divide cluster resources between multiple users or applications. We can define resource quotas for each namespace. In this way we can ensure that the Kubernetes objects within a specific namespace do not exceed the allocated limit and do not disturb other users or namespaces.

Command to list the namespaces within a Kubernetes cluster

kubectl get namespace

Command to create a namespace

kubectl create namespace [namespace-name]

Command to delete a namespace and resources within the namespace.

kubectl delete namespace [namespace-name]
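The resource quotas mentioned above are themselves Kubernetes objects. The following is a minimal sketch that builds a ResourceQuota manifest as a Python dictionary and prints it as JSON. The helper name `resource_quota`, the object name `team-quota`, the namespace `team-a` and the numbers are assumptions chosen only for illustration.

```python
import json


def resource_quota(namespace: str, cpu: str, memory: str, max_pods: int) -> dict:
    """Build a ResourceQuota manifest that caps what one namespace may request."""
    return {
        "apiVersion": "v1",
        "kind": "ResourceQuota",
        # "team-quota" is a made-up object name.
        "metadata": {"name": "team-quota", "namespace": namespace},
        "spec": {
            "hard": {
                "requests.cpu": cpu,        # total CPU all pods may request
                "requests.memory": memory,  # total memory all pods may request
                "pods": str(max_pods),      # maximum number of pods
            }
        },
    }


quota = resource_quota("team-a", cpu="4", memory="8Gi", max_pods=20)
print(json.dumps(quota, indent=2))
```

Since kubectl accepts JSON as well as YAML, the printed output could be saved to a file such as quota.json and applied with kubectl apply -f quota.json.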

Kubernetes Objects

According to the official Kubernetes documentation, objects are persistent entities in the Kubernetes system. Kubernetes uses these entities to represent the state of your cluster. Specifically, they can describe

- What containerized applications are running

- The resources available to them

- The policies around their behavior such as restart policies, upgrades, and fault-tolerance

Basically, end users don’t have to worry about the underlying nodes on which the applications or workloads are deployed. Kubernetes takes care of the deployment, resource allocation, life cycle, policies and health of the deployed workloads. Each of the entities in the deployed workloads is an object in Kubernetes.

Pods in Kubernetes

In a Kubernetes cluster, Pods are the smallest deployable computing units that can be created and managed.

A Pod can contain one or more containers. But in Kubernetes deployments, we do not interact with containers directly. We deal with Pods.

Even though a pod can hold multiple containers, it is recommended to keep the number of containers within a pod to the minimum possible.
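As a rough illustration, a single-container Pod can be described with a small manifest. The sketch below builds one as a Python dictionary and prints it as JSON. The pod name `hello-pod`, the label `app: hello` and the `nginx:1.25` image are placeholders picked for this example only.

```python
import json

# A minimal Pod manifest with exactly one container.
pod = {
    "apiVersion": "v1",
    "kind": "Pod",
    "metadata": {
        "name": "hello-pod",          # placeholder name
        "labels": {"app": "hello"},   # labels are used later to select pods
    },
    "spec": {
        "containers": [
            {
                "name": "web",
                "image": "nginx:1.25",              # placeholder image and tag
                "ports": [{"containerPort": 80}],   # port the container listens on
            }
        ]
    },
}

print(json.dumps(pod, indent=2))
```

The `containers` field is a list, which is why a pod can hold more than one container, but most pods keep it to a single entry as in this sketch.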

Every pod has an IP address. Pods get created dynamically on the cluster nodes, and they are ephemeral. Kubernetes ensures that the objects we create in the cluster stay in their desired state. It may recreate a pod on a different node, but the workload remains in the cluster. Since a pod may end up on any cluster node with a new IP address, pods do not have a static reference.

A Pod goes through lifecycle phases (Pending, Running, Succeeded, Failed, Unknown). Managing a pod directly is not a good choice. If a node in the Kubernetes cluster fails, all the pods running on that node fail, and to keep the application running we would have to create a new pod on a healthy node. But we don’t have to do it manually. We can hand over this responsibility to Kubernetes through workloads, which are discussed in the Workloads section below.

In order to access a pod, we need to expose it. The mechanism that makes pods accessible to other applications and to the outside world is known as a Kubernetes Service.

Use the following command to list the pods in a Kubernetes cluster

kubectl get pods

By default, it lists the pods in the default namespace. If you want to see the pods across all namespaces, use the following command

kubectl get pods --all-namespaces

To list the pods running in a specific namespace, use the following command

kubectl get pods -n [namespace-name]

Workloads in Kubernetes

As already discussed, the smallest deployable unit in a Kubernetes cluster is a Pod. A Pod can have one or more containers inside it.

A Workload is an application or job that we deploy within Kubernetes. A typical application may have one or more logical components. These components are defined using pods, and the combined application or job is called a workload.

Instead of micro-managing the pods directly, we can create workload resources to manage a set of pods based on the policy that we define. Based on our rules, the Kubernetes controllers ensure that the specified number of pods with the specified memory, CPU and disk are running within the cluster in the desired state. In this way, we do not have to worry about our workload in case of node failures or partial health issues in the cluster.
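The idea of a controller keeping the desired number of pods running can be sketched as a small reconciliation function. This is a simplified illustration and not the actual controller code in Kubernetes. The function name `reconcile` and the pod names are invented for this example.

```python
from typing import List, Tuple


def reconcile(desired_replicas: int, running_pods: List[str]) -> List[Tuple[str, str]]:
    """Compare desired and actual state and return the actions needed to close the gap."""
    actions = []
    missing = desired_replicas - len(running_pods)
    if missing > 0:
        # Too few pods are running: request new ones.
        for i in range(missing):
            actions.append(("create", f"new-pod-{i}"))
    elif missing < 0:
        # Too many pods are running: remove the surplus.
        for name in running_pods[desired_replicas:]:
            actions.append(("delete", name))
    return actions


# One pod survived a node failure, but three replicas are desired.
print(reconcile(3, ["web-a"]))
# The replica count was scaled down from three to one.
print(reconcile(1, ["web-a", "web-b", "web-c"]))
# Desired and actual state already match, so nothing needs to happen.
print(reconcile(2, ["web-a", "web-b"]))
```

A real controller runs this kind of comparison continuously, which is why a failed node or a changed replica count is corrected without manual work.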

The following are the workload resources available in Kubernetes.

- StatefulSet – In this case, the pods retain state. Each pod gets a stable identity, and its data can be stored in persistent volumes. We can add more pods, each with its own identity and storage, to handle fault tolerance. An example of a StatefulSet is a database deployed on a K8s cluster.

- DaemonSet – These are pods that provide node-local facilities. A DaemonSet runs a copy of a pod on every node (or on a selected set of nodes), for example a log collector, a monitoring agent or a network plugin.

- Deployment and ReplicaSet – This is good for managing stateless workloads within the K8s cluster. The pods can be deployed on any cluster node based on resource availability or the health of the system. The pods do not maintain any state, so replacing or interchanging pods does not affect the workload. An example of a Deployment is a Python Flask API.

- Job and CronJob – Jobs are tasks that run to completion and then stop. A Job that runs on a specific schedule is known as a CronJob.
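As an illustration of the most common workload resource, the sketch below builds a Deployment manifest as a Python dictionary. The helper name `deployment`, the name `flask-api`, the image reference `example/flask-api:1.0` and the resource numbers are assumptions for this example and do not refer to a real image.

```python
import json


def deployment(name: str, image: str, replicas: int) -> dict:
    """Build a Deployment manifest that keeps `replicas` copies of one pod running."""
    labels = {"app": name}
    return {
        "apiVersion": "apps/v1",
        "kind": "Deployment",
        "metadata": {"name": name},
        "spec": {
            "replicas": replicas,  # desired number of pods
            # The selector must match the labels in the pod template below.
            "selector": {"matchLabels": dict(labels)},
            "template": {
                "metadata": {"labels": dict(labels)},
                "spec": {
                    "containers": [
                        {
                            "name": name,
                            "image": image,
                            # Illustrative CPU and memory requests and limits.
                            "resources": {
                                "requests": {"cpu": "100m", "memory": "128Mi"},
                                "limits": {"cpu": "500m", "memory": "256Mi"},
                            },
                        }
                    ]
                },
            },
        },
    }


manifest = deployment("flask-api", "example/flask-api:1.0", replicas=3)
print(json.dumps(manifest, indent=2))
```

Scaling the application up or down then comes down to changing the `replicas` value and applying the manifest again.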

Services in Kubernetes

A Service is a mechanism to expose a workload running on a set of pods inside Kubernetes as a network service. Pods may get created, destroyed or relocated within a cluster. A Service defines a stable route to the application running inside the underlying pods. So the user does not have to worry about where a pod is running within the cluster and can access the application through the static service endpoint.

Also there can be multiple replicas of the same pod. A Service will load balance the requests between these underlying pods.
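A Service usually finds its pods through labels. The sketch below shows a Service manifest as a Python dictionary together with a toy version of label selection. The `matches` helper, the pod names and the IP addresses are invented for illustration, and the matching logic is a simplification of what Kubernetes does internally.

```python
from typing import Dict

# A Service that forwards port 80 to port 5000 on pods labelled app=flask-api.
service = {
    "apiVersion": "v1",
    "kind": "Service",
    "metadata": {"name": "flask-api"},
    "spec": {
        "selector": {"app": "flask-api"},
        "ports": [{"port": 80, "targetPort": 5000}],
    },
}


def matches(selector: Dict[str, str], labels: Dict[str, str]) -> bool:
    """A pod is selected when it carries every key/value pair of the selector."""
    return all(labels.get(key) == value for key, value in selector.items())


# Hypothetical pods currently running in the cluster.
pods = [
    {"name": "flask-api-1", "ip": "10.0.0.4", "labels": {"app": "flask-api"}},
    {"name": "flask-api-2", "ip": "10.0.1.7", "labels": {"app": "flask-api"}},
    {"name": "db-0", "ip": "10.0.2.9", "labels": {"app": "postgres"}},
]

# The pod IPs the Service would spread requests across.
endpoints = [p["ip"] for p in pods if matches(service["spec"]["selector"], p["labels"])]
print(endpoints)
```

When a pod is replaced and comes back with a new IP address, only the list of endpoints changes. The Service name and port that clients use stay the same.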

Volumes

The disk in a container is ephemeral. This means that if a container restarts or the pod gets redeployed to another node, the contents stored locally in the container are lost. To store data permanently, we need to use volumes backed by persistent storage in Kubernetes.

Persistent Volumes (PV)

Just like a node, a Persistent Volume (PV) is a resource in the Kubernetes cluster. It is a unit of storage provisioned by the administrator, or dynamically, for use by Kubernetes workloads. Think of a PV as the disk in our system.

The Persistent Volume life cycle does not depend on the Pod life cycle. Pods request storage from persistent volumes through claims, but a Pod has no control over the life cycle of a persistent volume.

The internal implementation of a Persistent Volume can be NFS, iSCSI, AWS EBS, AWS EFS, Azure Files or any cloud-specific storage system.

Persistent Volume Claim (PVC)

A Persistent Volume Claim is a request for storage. A Persistent Volume Claim gets its storage allocation from a Persistent Volume. A Pod refers to the claim in its specification to mount the storage; the claim itself is a separate object and is not deleted automatically when the Pod goes away.

The relationship between Persistent Volume Claim and Persistent Volume is similar to the relationship between a Pod and node. A pod uses the node resources (CPU & Memory) to run. Similarly a PVC uses the resources (storage) from PV.

Claims can request a specific size and access mode. The access modes include ReadWriteOnce, ReadOnlyMany and ReadWriteMany.
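To show how a claim and a pod fit together, here is a minimal sketch with both manifests written as Python dictionaries. The names `data-claim`, `app-with-storage` and `data`, the 5Gi size, the `/data` mount path and the `nginx:1.25` image are placeholders chosen for this example.

```python
import json

# A claim asking for 5Gi of storage that one node can mount read-write.
pvc = {
    "apiVersion": "v1",
    "kind": "PersistentVolumeClaim",
    "metadata": {"name": "data-claim"},
    "spec": {
        "accessModes": ["ReadWriteOnce"],
        "resources": {"requests": {"storage": "5Gi"}},
    },
}

# A pod that mounts the claimed storage at /data.
pod = {
    "apiVersion": "v1",
    "kind": "Pod",
    "metadata": {"name": "app-with-storage"},
    "spec": {
        "containers": [
            {
                "name": "app",
                "image": "nginx:1.25",  # placeholder image
                "volumeMounts": [{"name": "data", "mountPath": "/data"}],
            }
        ],
        # The volume refers to the claim by name, not to a specific PV.
        "volumes": [
            {"name": "data", "persistentVolumeClaim": {"claimName": "data-claim"}}
        ],
    },
}

print(json.dumps([pvc, pod], indent=2))
```

The pod only knows the name of the claim. Which Persistent Volume actually backs it is decided when Kubernetes binds the claim to a volume.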

Container Registry

A container registry is the repository used to store container images. We can compare a container registry with version control systems like Git or artifact repositories like a Maven repository.

Container images are stored with their versions (tags), and container runtimes or orchestration systems such as Docker and Kubernetes pull these images from the registry by using the reference URL and the tag.
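The structure of an image reference can be illustrated with a small parser. This is a deliberately simplified sketch: the function name `split_image_reference` is made up, digest references (the `@sha256:...` form) are not handled, and the defaults of `docker.io` and `latest` follow the usual Docker convention rather than anything specific to a given cluster.

```python
from typing import Tuple


def split_image_reference(reference: str) -> Tuple[str, str, str]:
    """Split 'registry/repository:tag' into (registry, repository, tag)."""
    # A tag is whatever follows a ':' that comes after the last '/'.
    last_slash = reference.rfind("/")
    last_colon = reference.rfind(":")
    if last_colon > last_slash:
        name, tag = reference[:last_colon], reference[last_colon + 1:]
    else:
        name, tag = reference, "latest"  # no tag given

    # The first path component is treated as a registry host when it looks
    # like a hostname (contains '.' or ':') or is 'localhost'.
    first, sep, rest = name.partition("/")
    if sep and ("." in first or ":" in first or first == "localhost"):
        return first, rest, tag
    return "docker.io", name, tag


for ref in ["nginx", "myuser/app:dev", "registry.example.com:5000/team/app:1.4.2"]:
    print(ref, "->", split_image_reference(ref))
```

The registry host `registry.example.com:5000` and the repository names are examples only. The point is that one reference carries the registry, the repository and the tag (version).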

Some popular container registries are

- Docker hub

- AWS Elastic Container Registry (ECR)

- Azure Container Registry (ACR)

- Google Artifact Registry (the successor to Google Container Registry, GCR)

- Red Hat Quay

- Harbor

Config Maps in Kubernetes

Kubernetes provides an easy way to store non-sensitive key-value pairs in the cluster and access them through the Kubernetes API. This feature is called Config Map.

Config map does not provide secrecy. So we should not store any confidential information in the config map.

A config map helps us decouple environment-specific configuration from the container images and thus keeps the images generic.

For example, if we have Development and Production environments, there will be environment-specific configurations in the application. Config maps help us maintain the environment-specific configuration outside the container image. We can store the configurations in a config map and build one generic application image for both the dev and prod environments. At the time of deployment, the pod refers to the config map to access the configuration. This improves the portability of the application.
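The dev and prod example can be sketched as follows. Both environments use the same container definition, and only the config map differs. The helper name `config_map`, the keys `LOG_LEVEL` and `API_URL`, the URLs and the image reference `example/app:1.0` are hypothetical values for illustration.

```python
import json


def config_map(name: str, data: dict) -> dict:
    """Build a ConfigMap manifest; all values must be strings."""
    return {
        "apiVersion": "v1",
        "kind": "ConfigMap",
        "metadata": {"name": name},
        "data": data,
    }


# Same ConfigMap name in both environments, different values.
dev_config = config_map("app-config", {
    "LOG_LEVEL": "debug",
    "API_URL": "http://api.dev.example.internal",
})
prod_config = config_map("app-config", {
    "LOG_LEVEL": "warning",
    "API_URL": "http://api.prod.example.internal",
})

# One generic container definition that works in both environments:
# every key of the ConfigMap becomes an environment variable.
container = {
    "name": "app",
    "image": "example/app:1.0",
    "envFrom": [{"configMapRef": {"name": "app-config"}}],
}

print(json.dumps({"dev": dev_config, "prod": prod_config, "container": container}, indent=2))
```

Because the container refers to the config map only by name, the same image and pod definition can be deployed to either environment without a rebuild.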

Secrets in Kubernetes

Secrets are similar to config maps. The difference is that they are intended for sensitive information such as passwords, SSL certificates, secret keys and tokens. This helps us store sensitive information separately instead of managing it within the Pod. Secrets can be retrieved through the Kubernetes API, and access to them can be controlled by using Kubernetes RBAC. Note that, by default, secret values are only base64-encoded and not encrypted, so RBAC restrictions and encryption at rest should be configured for real protection.
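In a Secret manifest, the values under `data` are base64-encoded strings. The sketch below builds such a manifest in Python. The helper name `secret`, the object name `db-credentials` and the credentials are dummy values for illustration only.

```python
import base64
import json


def secret(name: str, values: dict) -> dict:
    """Build an Opaque Secret manifest with base64-encoded values."""
    encoded = {
        key: base64.b64encode(value.encode("utf-8")).decode("ascii")
        for key, value in values.items()
    }
    return {
        "apiVersion": "v1",
        "kind": "Secret",
        "metadata": {"name": name},
        "type": "Opaque",
        "data": encoded,
    }


# Dummy credentials; never commit real ones to source control.
db_secret = secret("db-credentials", {
    "username": "app_user",
    "password": "not-a-real-password",
})
print(json.dumps(db_secret, indent=2))

# Base64 is an encoding, not encryption: anyone can reverse it.
print(base64.b64decode(db_secret["data"]["username"]).decode("utf-8"))
```

The last line shows that the encoded value can be decoded by anyone who can read the manifest, which is why access to Secrets should be restricted.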

Conclusion

I will keep updating this article with more details. I hope this blog helps budding engineers who want to excel in their Kubernetes careers.

I have written another article with frequently used commands in Kubernetes – Kubernetes Cheat Sheet. It can be used as a handbook for reference.

Feel free to comment if you have any questions or suggestions. Subscribe to the blog to get frequent updates.